Sometimes I can’t help but wonder whether data science as a discipline is still in the dark ages. From my vantage point as a practicing data scientist, I see that too many projects still rely on personal heroics for success. By and large, ours is still a discipline dominated by the need for stars and the attendant problems of relying on them for team success: work-life imbalance for the star, single point of failure for the project, wide variability in outcomes between projects, unrepeatable successes, etc.

This state of affairs is obviously undesirable and unsustainable. In a way, it reminds me of the early days of software engineering, which are documented instructively in classics like The Mythical Man-Month by Frederick P. Brooks Jr. Of course, software engineering has come a long way, and agile practices, underpinned by the well-accepted principles of the Agile Manifesto, have had an outsized impact on how modern companies develop software. I believe some of those agile practices have a role to play in how we conduct data science projects. This article describes how I think Kanban, an important agile tool I have been learning about, can be applied in the analytics life cycle.

So what is Kanban? In a nutshell, Kanban (a signboard in Japanese) in the context of software development is a simple system designed to achieve two objectives:

- Optimise the throughput of a software development team by controlling the number and nature of work-in-progress (WIP) items at all times.

- Introduce transparency and encourage focussed communication between stakeholders by providing a way to visualise the work to be done, including WIP and backlogs, and the workflow being used to achieve them.

The first objective is related to the Theory of Constraints (TOC) introduced by Goldratt in the book The Goal. The basic observation of TOC is that the maximal throughput that can be achieved in a production system is bounded by the throughput that can be achieved by the major constraint or weakest link in the system, and that all improvements to a production system can be organised around an iterative process to (1) identify the constraint; and (2) improve the throughput of the constraint by subordinating all other steps to the capacity of the constraint.
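
To make the TOC observation concrete, here is a minimal sketch that treats a production system as a serial pipeline. The stage names and weekly capacities are invented purely for illustration; they are not taken from The Goal or from any real team.

```python
# Hypothetical stage capacities, in work items per week (illustrative numbers only).
capacities = {
    "specification": 12,
    "development": 5,
    "testing": 9,
    "release": 15,
}


def find_constraint(stage_capacities):
    """Step (1): return the (stage, capacity) pair with the lowest capacity."""
    stage = min(stage_capacities, key=stage_capacities.get)
    return stage, stage_capacities[stage]


def system_throughput(stage_capacities):
    """A serial pipeline cannot finish items faster than its slowest stage."""
    return min(stage_capacities.values())


constraint, capacity = find_constraint(capacities)
print(f"constraint: {constraint} ({capacity} items/week)")
print(f"system throughput: {system_throughput(capacities)} items/week")

# Speeding up a stage that is not the constraint leaves throughput unchanged,
# whereas raising the capacity of the constraint raises it.
faster_testing = {**capacities, "testing": 20}
faster_development = {**capacities, "development": 8}
print(system_throughput(faster_testing), system_throughput(faster_development))
```

Under these assumed numbers, improving testing changes nothing, which is why TOC organises all improvement effort around the constraint.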

In the context of software development, the obvious constraint in the development process is the software developers, who must be protected from being overwhelmed by upstream (product managers, business owners) and downstream (software testers, release engineers, users) activities. A software developer’s productivity, in terms of throughput (the rate at which features/stories are completed), is reported to be inversely proportional to the number of WIP items the person is working on concurrently, which is yet more evidence that we are bad at multi-tasking. An obvious way to improve each developer’s throughput, and by extension the whole team’s throughput, is therefore to limit the number of WIP items that are allowed in the development process at any one time. There are different ways to arrive at the WIP limit; a general rule of thumb is to limit WIP to X or 2X if there are X software developers in the team. Once such a WIP limit is set, all proposed and ongoing work is visualised and managed on a board (a Kanban) that shows what’s in each stage of development at any one time.

Each work item is represented as a sticky note containing key information about the item. As each work item is processed, the sticky note is moved manually across the board. Importantly, new work is only pulled into the system once a work item is finished and a slot frees up, and the selection of the new work item is typically done through a prioritisation process involving product managers and business owners. This pull system is exactly how the WIP limit is imposed.
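
The pull mechanism can be sketched in a few lines of code. This is a toy model under stated assumptions rather than a description of any real Kanban tool: the `KanbanBoard` class, its three columns, and the story names are all hypothetical, and the backlog is assumed to have been ordered already by the prioritisation process.

```python
from collections import deque


class KanbanBoard:
    """Toy board with three columns: backlog, in progress, done."""

    def __init__(self, wip_limit):
        self.wip_limit = wip_limit
        self.backlog = deque()   # assumed to be in priority order already
        self.in_progress = []
        self.done = []

    def add_to_backlog(self, item):
        self.backlog.append(item)

    def pull(self):
        """Move the next backlog item into progress, but only if a slot is free.

        Returns the pulled item, or None when the WIP limit is reached
        or the backlog is empty.
        """
        if len(self.in_progress) >= self.wip_limit or not self.backlog:
            return None
        item = self.backlog.popleft()
        self.in_progress.append(item)
        return item

    def finish(self, item):
        """Mark an in-progress item as done, which frees up a slot."""
        self.in_progress.remove(item)
        self.done.append(item)


# Rule of thumb from the text: with X developers, limit WIP to X or 2X.
developers = 2
board = KanbanBoard(wip_limit=developers)
for story in ["story A", "story B", "story C"]:
    board.add_to_backlog(story)

print(board.pull())   # story A
print(board.pull())   # story B
print(board.pull())   # None: the board is at its WIP limit
board.finish("story A")
print(board.pull())   # story C: pulled only after a slot freed up
```

Note that nothing is ever pushed onto the board: the only way work enters `in_progress` is through `pull`, and `pull` refuses when the limit is reached.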

The very simple and public nature of a Kanban allows all stakeholders to easily see all the WIP items, including their status and potential blockers, as well as the backlog. Such a complete and transparent view of the development process can, in turn, encourage project stakeholders to move beyond personal agendas and start focussing on achieving results and doing what’s right for the team and the business. This is how Kanban achieves the second objective outlined above.

Kanban as a system is deceptively simple. The proof of the pudding, as they say, is in the eating. Kanban has been implemented in many different companies, and many teams have reported significant boosts in productivity, reductions in delivery lead time, and improvements in predictability as a result of adopting the system. Some of these successes are documented in the book Kanban by David Anderson.

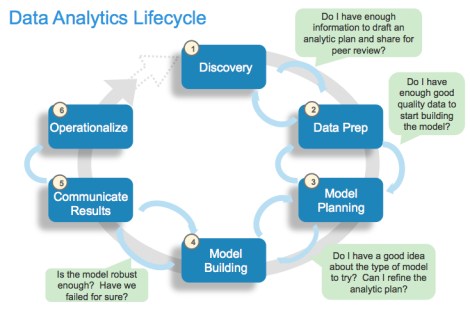

We are now ready to discuss possible applications of Kanban in data science projects. The following diagram shows the now familiar phases in the life cycle of a DS project.

In a typical DS project, the Model Planning and Model Building phases are the creative phases, where hypotheses are generated, tested with data or preliminary models, and the results fed back into the overall thinking process to generate yet more hypotheses. For a while at least, questions don’t beget answers but more and more questions. This necessary creative process, if not managed well, can lead to analysis paralysis, loss of project focus, overwhelmed staff, and ultimately project chaos and loss of trust among project stakeholders. I think this is where Kanban will be most useful in managing a DS project.

The key ingredients are certainly all in place.

- Each hypothesis is a work item that needs to be processed in multiple steps.

- There is a good supply of work items in the form of new and derived hypotheses that get generated throughout the creative process.

- We need to get through as many of the hypotheses as possible in the allotted time; that’s the throughput measure we want to optimise.

- The rate at which we can process hypotheses is constrained by the project data scientists.

A Kanban system, with its WIP-limited pull system and clear workflow visualisation, is exactly the management structure we need to control the creative process in the Model Planning and Building phases. It builds trust among stakeholders by making sure there is a constant and predictable flow of project deliveries in the form of processed hypotheses and related insights. It also introduces transparency into the backlog of work items and encourages focussed and open communication in the prioritisation process used to pick the next work item whenever a slot frees up. Finally and importantly, by limiting the number of WIP hypotheses, Kanban maximises the productivity of project data scientists without placing undue stress on them. And Kanban achieves all that without having to place any process constraints that can impede the creativity of project data scientists, whose creative juices are of course what we need to drive innovation in the first place.
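
As a rough illustration of how this might look for hypotheses, the sketch below keeps a prioritised backlog and lets a completed hypothesis feed derived hypotheses back into it. Everything here is an assumption made for the example: the `HypothesisBoard` class, the convention that a lower number means higher priority, and the churn-related hypotheses are all invented.

```python
import heapq
from itertools import count


class HypothesisBoard:
    """Toy WIP-limited board whose work items are hypotheses."""

    def __init__(self, wip_limit):
        self.wip_limit = wip_limit
        self._backlog = []        # heap of (priority, sequence, statement)
        self._sequence = count()  # tie-breaker: equal priorities keep insertion order
        self.in_progress = []
        self.done = []

    def propose(self, statement, priority):
        """Add a hypothesis to the backlog (lower number = pulled sooner)."""
        heapq.heappush(self._backlog, (priority, next(self._sequence), statement))

    def pull(self):
        """Pull the highest-priority hypothesis if a WIP slot is free, else None."""
        if len(self.in_progress) >= self.wip_limit or not self._backlog:
            return None
        _, _, statement = heapq.heappop(self._backlog)
        self.in_progress.append(statement)
        return statement

    def complete(self, statement, derived=()):
        """Finish a hypothesis and queue any hypotheses it gave rise to."""
        self.in_progress.remove(statement)
        self.done.append(statement)
        for new_statement, priority in derived:
            self.propose(new_statement, priority)


board = HypothesisBoard(wip_limit=1)
board.propose("churn is driven by price rises", priority=2)
board.propose("churn is driven by support wait times", priority=1)

current = board.pull()
print(current)        # churn is driven by support wait times
print(board.pull())   # None: the single WIP slot is taken

# Testing a hypothesis often raises new questions; they join the backlog
# and compete on priority instead of being worked on immediately.
board.complete(current, derived=[("wait times matter most for new customers", 0)])
print(board.pull())   # wait times matter most for new customers
```

The point of the design is that derived hypotheses never jump straight into work in progress: they go through the same prioritised backlog as everything else, which is what keeps the question-begets-question dynamic under control.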

That brings me to one last point: unlike the use of Kanban in software development, where major decisions relating to what to work on next are made externally by product managers and business owners, the data scientists themselves must play a major role, in consultation with stakeholders, in populating the backlog and prioritising the flow of hypotheses and models to work on. At the end of the day, successful data science outcomes can only be achieved with an intimate knowledge of the interplay between business processes and goals, data, and the capabilities and limitations of statistical science. It is in that intersection that data scientists live and thrive.

The above outlines a case for using Kanban in a key part of the analytics life cycle. In practice, the design and implementation of an actual Kanban process has contextual nuances and I refer the reader to the excellent (if chatty) book Kanban: Successful Evolutionary Change for Your Technology Business by David J. Anderson for details.